When Creative Is Cheap, Learning Is the Bottleneck

April 15th, 2026

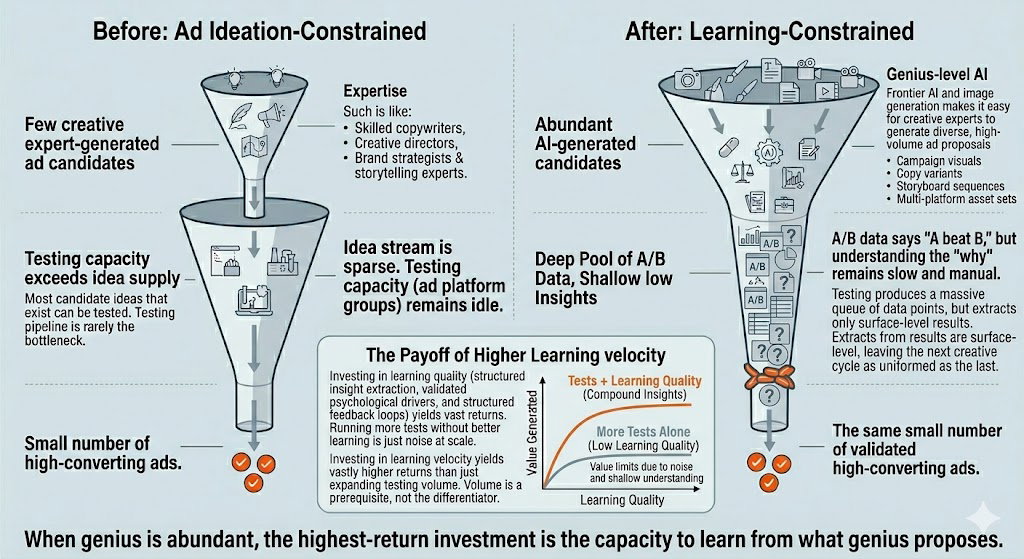

AI tools have allowed ad teams to create more good creative ideas than they can test. That sounds like progress. The problem is that more ideas just moved the bottleneck, from production to learning. The teams that win now are the ones that can run lots of tests and extract lessons across them, compounding their understanding of their customers.

You finally have the ability to make more good ads than you can use. Yay. Also, you have a new problem.

The best ad teams used to be bottlenecked by creative production and ideas. Most of the testing infrastructure, the ad platforms, the audience segments, the analytics dashboards, sat underused, waiting for something worth testing.

That bottleneck has moved. And it's moved somewhere most teams aren't looking.

The Old World: Creative Was the Scarce Resource

Producing a single polished ad variant required a chain of expensive human expertise: concepting, copywriting, art direction, brand review. Teams entered campaign cycles with three to five strong concepts. That's all the pipeline could feed.

The rational investment was obvious: streamline creative production, because that's where the constraint was. Testing capacity on Meta, Google, and TikTok regularly exceeded the supply of meaningfully different ideas to test. The platforms were ready. The ideas weren't.

What Changed: Creative Became Abundant

The same expert team, armed with current AI tools, can now generate dramatically more high-quality variants. Not garbage. Genuinely differentiated work:

- Dozens of copy angles reframing the same value proposition

- Image variations showing the product in different contexts, moods, and visual styles

- Platform-native asset sets adapted for different formats and audiences

And it's not just AI. Contractors have more bandwidth. Photo shoots now produce 200 images where you'd previously select eight. A freelancer with access to brand guidelines and last month's test results can generate solid options without a formal briefing cycle.

The human expert's role has shifted from producing a handful of polished ideas to curating a large pool of strong candidates. Their taste and strategic judgment become the filter, not the bottleneck.

The Obvious Conclusion (and Why It's Incomplete)

The obvious conclusion is that testing is now the bottleneck. That's partly right, but it misses the deeper problem.

Running more tests without extracting better understanding from them doesn't clear the bottleneck. It moves it one step downstream. A team that runs 50 two-variant tests and reads "A beat B" each time hasn't solved anything. They've just generated more noise.

The real constraint is learning velocity. How fast can you go from test results to genuine understanding of what worked and why?

What this looks like in practice

- A contractor asks what they should make next. The team has three months of test data pointing at underexplored angles, but no process to turn that into direction. The capacity sits idle or produces low-signal work.

- A photo shoot produces 200 images. A lighter-touch process could turn 40 into testable variants in a day. The limiting factor isn't production. It's knowing how to read the results once those variants have run.

- A freelance copywriter generates fifteen headline angles from your experiment results and brand guidelines. But if the last ten tests didn't surface why a headline worked, those fifteen angles are still guesses.

The hidden cost most teams don't see

Two-variant tests tell you which ad won in that context, on that day, against that audience. They don't tell you whether it was the headline, the image, the emotional tone, or something else entirely.

Without that understanding, the next cycle starts from nearly the same level of ignorance. You ran the test. You didn't learn much.

Where the Highest Return Now Lives

More test volume only pays off when you can extract learning from each test. Three things actually move the needle:

1. Learning quality: the scarcest resource in most ad programs right now

Move beyond "variant A beat variant B" to understanding which elements drove the result: the headline, the image framing, the emotional tone, the CTA. Structured tests that isolate variables produce this. Unstructured two-variant tests rarely do.
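As a rough illustration of what "isolating variables" can mean, here is a minimal sketch of a 2x2 structured test in which every headline is paired with every image. The variant names and all numbers are invented for the example, and the `main_effects` helper is hypothetical, not part of any ad platform's API. It only averages click-through rates; a real analysis would also need to check sample sizes, statistical significance, and interactions between elements.

```python
from collections import defaultdict

# Hypothetical results from a 2x2 structured test. Each key is a
# (headline, image) combination; all figures are made up for illustration.
results = {
    ("benefit_headline", "lifestyle_image"): {"impressions": 10000, "clicks": 240},
    ("benefit_headline", "product_image"): {"impressions": 10000, "clicks": 200},
    ("urgency_headline", "lifestyle_image"): {"impressions": 10000, "clicks": 180},
    ("urgency_headline", "product_image"): {"impressions": 10000, "clicks": 140},
}


def ctr(cell):
    """Click-through rate for one variant."""
    return cell["clicks"] / cell["impressions"]


def main_effects(results, factor_names):
    """Average CTR for each level of each factor, averaged over the other factor."""
    effects = {}
    for position, name in enumerate(factor_names):
        by_level = defaultdict(list)
        for combo, cell in results.items():
            by_level[combo[position]].append(ctr(cell))
        effects[name] = {
            level: sum(values) / len(values) for level, values in by_level.items()
        }
    return effects


effects = main_effects(results, ["headline", "image"])

for factor, levels in effects.items():
    for level, avg in levels.items():
        print(f"{factor:9s} {level:18s} average CTR {avg:.2%}")
```

Because each headline appears with both images, the difference between headline averages is not confounded with the image choice. A single two-variant test of "benefit headline plus lifestyle image" against "urgency headline plus product image" could not separate the two.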

2. Feedback loops: connecting results back to creation

Test results need to flow directly into the creative process, so each round of new variants is informed by what actually worked, not just brand intuition. In practice, this requires someone to synthesize results into direction and communicate that clearly to the people generating the next batch of ideas. Most teams don't have a reliable process for this step.

3. Throughput: but only as a multiplier

Running more concurrent experiments, shorter test windows, faster rotation of variants. This matters, but only as a multiplier on learning quality. Faster noise is still noise.

The Bottom Line

The team that wins is not the one with the most ads or even the most tests. It's the one that learns fastest and compounds those learnings into the next cycle.

When the supply of good creative ideas is no longer the constraint, the scarce and valuable resource becomes the ability to learn from testing them. Running more tests without extracting better understanding is not an upgrade. It's the same bottleneck in a new location.

"The highest-return investment is no longer in generating ideas. It's in the infrastructure, processes, and analytical capability to understand what your tests are actually telling you."